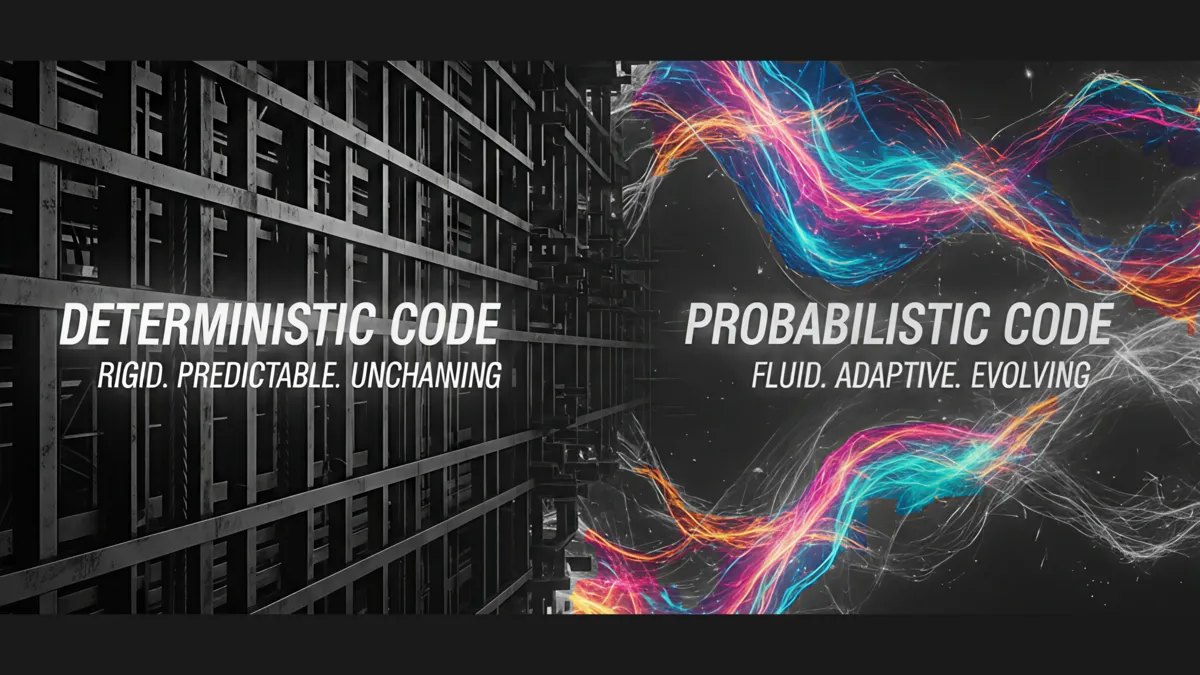

Why Your AI Needs Rules: Bridging the Gap Between Deterministic and Probabilistic Reasoning

The future of software isn't just AI. It's hybrid architecture. Here is the essential guide for developers and architects navigating the shift from rigid code to fluid patterns.

We are living through one of the biggest shifts in software architecture since the advent of the internet.

For decades, software engineering was built on a foundation of absolute certainty. We wrote code that followed explicit rules: If X happens, then do Y. It was comforting, testable, and reliable.

Then came Generative AI.

Suddenly, we are building applications on top of models that don't follow rigid rules. They deal in likelihoods, best guesses, and statistical weights. For many traditional engineers and enterprise architects, this shift from certainty to uncertainty is terrifying.

To build the next generation of reliable autonomous agents and complex simulators, we have to stop viewing these two approaches as opposing forces. We need to understand them as distinct tools in a modern toolbox. That starts with recognizing that most AI projects fail for architectural and planning reasons long before the technology itself becomes the problem.

This guide breaks down deterministic and probabilistic reasoning in application development.

The Comfort Zone: Deterministic Reasoning

Deterministic systems are the bedrock of traditional computing. A deterministic algorithm is a process that, given a particular input, will always produce the exact same output, with the underlying machine passing through the same sequence of states.

Think of it as a train on a track. It has one destination and one route to get there.

The hallmark: 100% predictability.

Real-world example: A banking transaction system. If you transfer $100 from Account A to Account B, the code must deduct exactly $100 from one and add exactly $100 to the other. There is no room for "maybe."
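
As a minimal sketch of that banking example, the function below moves money between two in-memory balances. The names `transfer` and `InsufficientFunds` and the account data are hypothetical, and a real system would wrap this in a database transaction. The point is only that the same input always yields the same output and that bad input fails loudly.

```python
from decimal import Decimal


class InsufficientFunds(Exception):
    """Raised when the source account cannot cover the transfer."""


def transfer(balances: dict[str, Decimal], source: str, target: str,
             amount: Decimal) -> dict[str, Decimal]:
    """Return new balances after moving `amount` from `source` to `target`."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if balances[source] < amount:
        raise InsufficientFunds(f"{source} cannot cover {amount}")
    updated = dict(balances)  # leave the caller's data untouched
    updated[source] -= amount
    updated[target] += amount
    return updated


# Hypothetical starting balances for the illustration.
balances = {"A": Decimal("250.00"), "B": Decimal("40.00")}
after = transfer(balances, "A", "B", Decimal("100.00"))
print(after)
```

`Decimal` is used instead of `float` so that currency arithmetic is exact rather than subject to binary rounding.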

This is why industries like finance, healthcare, and critical infrastructure have spent decades building systems on deterministic foundations — and why frameworks like NIST's AI Risk Management Framework emphasize the importance of reliability and predictability as core AI system properties.

The New Frontier: Probabilistic Reasoning

Probabilistic systems, including modern Large Language Models (LLMs), operate on uncertainty. They don't follow explicit if/then rules written by a human. Instead, they learn patterns from massive datasets and make predictions based on statistical likelihood.

Think of it as an off-road vehicle navigating unknown terrain. It assesses the environment and chooses the path that looks most likely to succeed based on past experience.

The hallmark: Adaptive, creative, but inherently uncertain.

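Real-world example: A generative AI chatbot. When you ask it a question, it isn't looking up a pre-written answer. It generates the response token by token: at each step, the model computes a probability for every possible next token and then picks one, usually by sampling from the most likely candidates. That sampling step is why the same prompt can produce different answers on different runs.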
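
The token-by-token process can be sketched with a toy example. The scores below are invented for illustration and do not come from a real model, and the helper names `softmax` and `sample_next_token` are assumptions. The sketch shows the two steps a model performs at each position: turning raw scores into probabilities, then drawing one token from that distribution.

```python
import math
import random


def softmax(logits: dict[str, float], temperature: float = 1.0) -> dict[str, float]:
    """Convert raw scores into probabilities that sum to 1."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def sample_next_token(logits: dict[str, float], temperature: float,
                      rng: random.Random) -> str:
    """Draw one token at random, weighted by its probability."""
    probs = softmax(logits, temperature)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


# Invented scores for the token that follows "The capital of France is".
logits = {" Paris": 5.0, " Lyon": 2.0, " located": 1.5}
probs = softmax(logits)
rng = random.Random(0)  # seeded only so the demo is repeatable
samples = [sample_next_token(logits, 1.0, rng) for _ in range(1000)]
print(probs)
print({tok: samples.count(tok) for tok in logits})
```

Lowering `temperature` concentrates probability on the top token and makes output more repeatable. Raising it spreads probability across alternatives.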

Research from Stanford's Human-Centered AI Institute has documented how this probabilistic nature creates both extraordinary capability and extraordinary risk — models can produce remarkably insightful outputs one moment and confidently fabricated ones the next.

The Architect's Cheat Sheet: A Comparison

For architects designing modern systems, knowing when to use which approach is critical. We've broken down the core differences into this framework:

| Concept | Deterministic Reasoning | Probabilistic Reasoning |
|---|---|---|
| Core Logic | Rigid rules (explicit code) | Learned patterns (training data) |
| Nature of Output | Exact and repeatable (true/false, pass/fail, a fixed value) | A distribution over possible outputs (confidence score from 0.0 to 1.0) |
| Primary Failure Mode | Logic error: the application crashes or throws an exception | Hallucination: the model is confidently wrong without knowing it |
| Best Applications | Compliance, financial math, security audit trails | Natural language understanding, computer vision, creative intuition |

This is the essential tension: deterministic systems fail loudly and predictably, while probabilistic systems fail quietly and unpredictably. Both failure modes are dangerous, but only one announces itself.

Why This Matters Now: The Agentic Shift

Why does this distinction matter right now? Because we are moving past simple chatbots and into the era of AI agents — systems capable of taking autonomous action to achieve goals.

If you rely solely on deterministic code, your agent will be brittle. It will fail the moment it encounters a scenario you didn't explicitly code for.

If you rely solely on probabilistic models, your agent will be untrustworthy. It might brilliantly solve a complex problem one minute, and then confidently "hallucinate" a disastrous financial transaction the next.

McKinsey's research on the state of AI adoption confirms what practitioners already know: organizations that treat AI as a technology bolt-on rather than an architectural decision consistently underperform. This is the same pattern we see in AI pilots that succeed but never scale — the technology works in isolation, but the architecture wasn't designed for production.

The Future is Hybrid: Deterministic Guardrails Around Probabilistic Cores

The winning architecture for 2026 and beyond is hybrid.

We must learn to build deterministic guardrails around probabilistic cores. We need to use rigid code to handle security, compliance, and final execution boundaries, while allowing probabilistic models to handle the messy, intuitive work of understanding intent and strategizing within those boundaries.

Here's what this looks like in practice:

- Input validation layer (deterministic): Sanitize and validate all inputs before they reach the model. Enforce schema constraints, rate limits, and access controls with traditional code.

- Reasoning layer (probabilistic): Let the LLM or ML model do what it does best: interpret ambiguous input, generate creative solutions, and handle edge cases that would require thousands of if/then rules.

- Output guardrail layer (deterministic): Before any AI-generated output reaches a user or triggers an action, pass it through deterministic checks. Does it comply with your business rules? Does it fall within acceptable ranges? Does it match required output formats?

- Execution layer (deterministic): Final actions (database writes, API calls, financial transactions, customer-facing messages) should always flow through deterministic code that enforces business logic.
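
The four layers above can be sketched end to end. This is an illustration under stated assumptions, not a reference implementation: `fake_model` stands in for a real LLM call, and `MAX_REFUND`, `ALLOWED_ACTIONS`, and the JSON shape are hypothetical business rules invented for the example.

```python
import json
from dataclasses import dataclass

MAX_REFUND = 200.00                       # hypothetical business rule
ALLOWED_ACTIONS = {"refund", "escalate"}  # hypothetical action whitelist


@dataclass
class Decision:
    action: str
    amount: float


def validate_input(message: str) -> str:
    """Layer 1 (deterministic): reject empty or oversized input."""
    text = message.strip()
    if not text or len(text) > 2000:
        raise ValueError("message is empty or too long")
    return text


def fake_model(message: str) -> str:
    """Layer 2 (probabilistic): stand-in for an LLM; proposes an action as JSON."""
    return json.dumps({"action": "refund", "amount": 950.0})


def check_output(raw: str) -> Decision:
    """Layer 3 (deterministic): enforce format, allowed actions, and ranges."""
    data = json.loads(raw)
    decision = Decision(action=str(data["action"]), amount=float(data["amount"]))
    if decision.action not in ALLOWED_ACTIONS:
        raise ValueError(f"action {decision.action!r} is not allowed")
    if decision.action == "refund" and not 0 < decision.amount <= MAX_REFUND:
        # Out-of-range proposals are downgraded, never executed as-is.
        return Decision(action="escalate", amount=0.0)
    return decision


def execute(decision: Decision, ledger: list) -> None:
    """Layer 4 (deterministic): the only code path that causes side effects."""
    ledger.append((decision.action, decision.amount))


def handle(message: str, model, ledger: list) -> Decision:
    text = validate_input(message)
    try:
        decision = check_output(model(text))
    except (ValueError, KeyError, TypeError):
        # Malformed or disallowed model output falls back to a safe default.
        decision = Decision(action="escalate", amount=0.0)
    execute(decision, ledger)
    return decision


ledger: list = []
print(handle("I want my money back", fake_model, ledger))
```

Here the stand-in model proposes a 950.00 refund, which exceeds the limit, so the guardrail turns it into an escalation before anything is executed. The model never writes to the ledger directly.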

This is not a theoretical ideal. It is the same hybrid pattern that organizations following a sound AI governance framework already implement: clear rules about what AI can and cannot do autonomously, with human-in-the-loop checkpoints at high-risk decision points.
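
One way to express such governance rules in code is a policy table that decides, deterministically, whether a proposed action runs automatically, waits for a person, or is refused. The action names, the `POLICY` table, and the `AUTO_REFUND_LIMIT` threshold below are all hypothetical; a real policy would come from your own governance process.

```python
from enum import Enum


class Route(Enum):
    AUTO = "auto"                  # the agent may act on its own
    HUMAN_REVIEW = "human_review"  # a person must approve first
    BLOCK = "block"                # never allowed


# Hypothetical policy table mapping agent actions to handling rules.
POLICY = {
    "send_reply": Route.AUTO,
    "issue_refund": Route.HUMAN_REVIEW,
    "delete_account": Route.BLOCK,
}
AUTO_REFUND_LIMIT = 50.0  # hypothetical threshold for low-risk refunds


def route(action: str, amount: float = 0.0) -> Route:
    """Decide how a proposed action is handled; unknown actions are blocked."""
    if action == "issue_refund" and 0 < amount <= AUTO_REFUND_LIMIT:
        return Route.AUTO
    return POLICY.get(action, Route.BLOCK)


for proposal in [("send_reply", 0.0), ("issue_refund", 20.0),
                 ("issue_refund", 500.0), ("wire_transfer", 10.0)]:
    print(proposal, route(*proposal).value)
```

Blocking unknown actions by default means a model that invents a new action name cannot bypass review.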

Practical Guidance: Running a Proof of Concept

If you're planning to run an AI proof of concept, the deterministic/probabilistic distinction should shape your design from day one:

- Define success criteria deterministically. Don't measure your POC by whether the AI "feels" smart. Measure it against explicit, binary pass/fail metrics that your business stakeholders agreed to upfront.

- Instrument your guardrails first. Before you integrate any LLM, build the deterministic boundary: logging, validation, and fallback paths. You want to know exactly when and how the probabilistic layer fails.

- Budget for uncertainty. Probabilistic systems require ongoing monitoring in ways that deterministic systems don't. Budget for evaluation pipelines, drift detection, and regular prompt/model tuning.
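
A deterministic success criterion can be as simple as a fixed test set plus an agreed threshold. The sketch below assumes a hypothetical ticket-classification POC: `stub_model`, the four cases, and the 0.9 threshold are invented for illustration, and exact string matching is only one possible scoring rule.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Case:
    prompt: str
    expected: str


def evaluate(model: Callable[[str], str], cases: list[Case],
             threshold: float) -> dict:
    """Score a model against fixed cases and return a binary verdict."""
    passed = 0
    failures = []
    for case in cases:
        try:
            output = model(case.prompt).strip().lower()
        except Exception as exc:  # a crash counts as a failed case
            failures.append((case.prompt, f"error: {exc}"))
            continue
        if output == case.expected:
            passed += 1
        else:
            failures.append((case.prompt, output))
    accuracy = passed / len(cases)
    return {"accuracy": accuracy, "passed": accuracy >= threshold,
            "failures": failures}


def stub_model(prompt: str) -> str:
    """Stand-in for the probabilistic layer under test."""
    return "billing" if "invoice" in prompt.lower() else "technical"


cases = [
    Case("My invoice is wrong", "billing"),
    Case("The app crashes on login", "technical"),
    Case("Please resend my invoice", "billing"),
    Case("I was charged twice", "billing"),
]
report = evaluate(stub_model, cases, threshold=0.9)
print(report)
```

The recorded failures show exactly where the probabilistic layer went wrong, and rerunning the same cases after each prompt or model change is a simple way to catch drift.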

MIT's work on AI system reliability reinforces this point: the organizations that succeed with AI are the ones that plan for its failure modes from the start, not the ones that discover them in production.


The Architect's Mandate

The future belongs to the architects who can wield both tools effectively.

This is not about choosing sides. It is not about deterministic versus probabilistic. It is about knowing which tool to reach for — and more importantly, knowing where one ends and the other begins.

The organizations that get this right will build AI systems that are both powerful and trustworthy. The ones that don't will either ship brittle systems that break at the first unexpected input, or ship unreliable systems that erode trust the first time they hallucinate something that matters.

Build the guardrails. Then let the AI run.
