Building accountability

Agents don't remember — they reconstruct. Context windows reset. Delegation loses the original criteria. We build the infrastructure that tracks what was agreed, what was delivered, and whether it was accepted.

Builders of AGLedger — accountability infrastructure for AI agents. Patent pending.

Your logging wasn't built for this

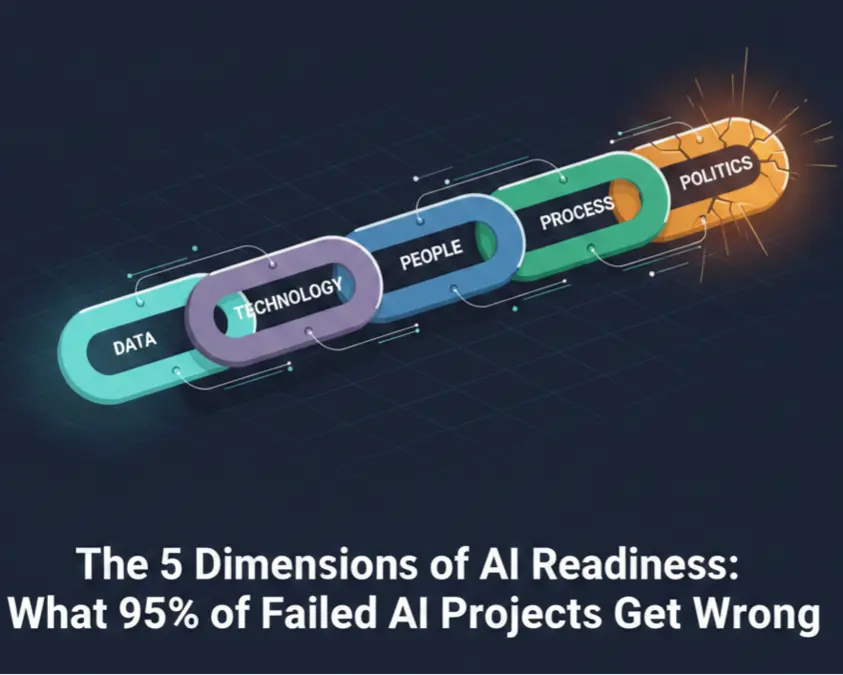

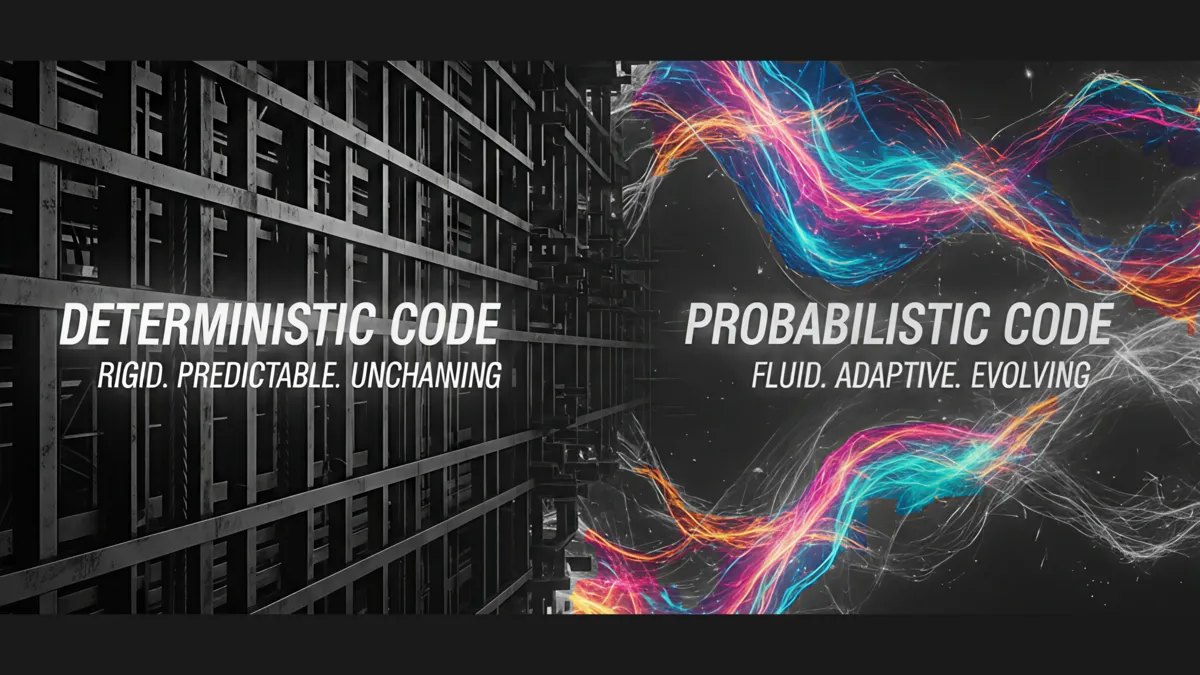

Security processes and logging designed for a deterministic world will not work in a probabilistic one. Traditional systems record what happened after the fact. But AI agents don't produce the same output twice. They lose context mid-task. They delegate to other agents who delegate further — and the original intent gets separated from the final execution by multiple context boundaries.

Agents don't remember. They reconstruct.

One second after an AI agent's context clears, ask it what it did and you get a plausible fabrication, not a record. It summarizes what it thinks happened and presents it as fact. Now add delegation: Agent A tells Agent B to do something, and Agent B reports back. Ask Agent A what the agreement was and you get Agent A's interpretation of Agent B's summary of a conversation that no longer exists in either context window.

Without structure, the only way to trust agents is to lock them down — rigid workflows, narrow permissions, hard-coded guardrails. You make them safe by making them limited. There's a better way.

Track intent from the start. If you bolt on accountability after the fact, you're back to reconstruction.
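As a rough illustration of what "track intent from the start" could look like, here is a minimal sketch. It is not AGLedger's API: the `Agreement` and `Ledger` names, their fields, and the `parent_id` link are all hypothetical, chosen only to show criteria being recorded before work begins and staying reachable across a delegation chain.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Agreement:
    """What was agreed, captured before any work starts (hypothetical shape)."""
    agreement_id: str
    principal: str                   # who asked for the work
    performer: str                   # who took it on
    criteria: tuple[str, ...]        # acceptance criteria, fixed at agreement time
    parent_id: Optional[str] = None  # set when this is a delegated sub-task


@dataclass
class Ledger:
    """In-memory record of agreed / delivered / accepted (illustrative only)."""
    agreements: dict[str, Agreement] = field(default_factory=dict)
    deliveries: dict[str, str] = field(default_factory=dict)
    accepted: dict[str, bool] = field(default_factory=dict)

    def agree(self, agreement: Agreement) -> None:
        if agreement.agreement_id in self.agreements:
            raise ValueError("agreement already recorded")
        self.agreements[agreement.agreement_id] = agreement

    def deliver(self, agreement_id: str, output: str) -> None:
        if agreement_id not in self.agreements:
            raise ValueError("nothing was agreed under this id")
        self.deliveries[agreement_id] = output

    def decide(self, agreement_id: str, accepted: bool) -> None:
        if agreement_id not in self.deliveries:
            raise ValueError("nothing delivered yet")
        self.accepted[agreement_id] = accepted

    def original_criteria(self, agreement_id: str) -> tuple[str, ...]:
        """Follow delegation links back to the root agreement's criteria."""
        current = self.agreements[agreement_id]
        while current.parent_id is not None:
            current = self.agreements[current.parent_id]
        return current.criteria


ledger = Ledger()
ledger.agree(Agreement("a1", "agent-a", "agent-b", ("report covers Q3", "cites sources")))
ledger.agree(Agreement("a2", "agent-b", "agent-c", ("draft summary",), parent_id="a1"))
ledger.deliver("a2", "summary text")
ledger.decide("a2", True)
```

The point of the sketch is that the answer to "what was originally agreed?" comes from a stored record (`ledger.original_criteria("a2")`), not from any agent's recollection of the conversation.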

This is coming for every business:

of Fortune 500 now run active AI agents

Aug 2026

EU AI Act full enforcement

of leaders cite accountability and auditability as their top concern about agents

Article 12 of the EU AI Act requires high-risk AI systems to record events automatically over their lifetime. For agents, that means structured, tamper-evident logs. Not optional. Not best-effort. Most companies plan to retrofit logging after the fact. For AI agents, that doesn't work.

Sources: Microsoft, KPMG AI Pulse Survey, EU Commission
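One common way to make an event log tamper-evident is a hash chain, where each entry commits to the one before it. The sketch below shows that general technique using only Python's standard library. It is an assumption for illustration, not a description of how AGLedger works or of what the EU AI Act prescribes; the `append` and `verify` helpers and the entry layout are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with a canonical event encoding."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(log: list, event: dict) -> None:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify(log: list) -> bool:
    """Recompute every link; any edited, reordered, or dropped entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list = []
append(log, {"agent": "agent-a", "action": "delegate", "to": "agent-b"})
append(log, {"agent": "agent-b", "action": "deliver", "ref": "a1"})
intact = verify(log)

log[0]["event"]["action"] = "approve"  # simulate an after-the-fact edit
tampered_detected = not verify(log)
```

A chain like this only detects edits if the latest hash is also stored somewhere the log's writer cannot quietly rewrite; otherwise the whole chain could be regenerated.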

What we do

Development, deployment, and governance—the full stack of building AI systems that enterprises can trust.

What we've built

We don't just advise. We ship.

“If you bolt on accountability after the fact, you're back to reconstruction.”

Michael Cooper

Founder & Principal, Tributary AI

Builder of AGLedger (accountability infrastructure for AI agents) and ChipClock (poker tournament SaaS). Nearly 30 years navigating enterprise technology transitions at Microsoft, Citrix, Simplot, and Micron. Hundreds of hours building production systems with Claude, Gemini, and Codex.