AI Isn't One Thing: A Decision Framework for LLMs, RPA, and ML

A mid-market manufacturer spent $180K on an LLM-powered "AI assistant" for its procurement team. Six months later, the company ripped it out after hallucinated supplier data corrupted three contracts. The actual problem? Structured invoice matching. A $60K RPA solution would likely have handled it.

The Conflation Problem

"AI" has become the most meaningless word in enterprise technology.

Vendors slap it on everything from simple if-then workflow rules to cutting-edge large language models. Your invoice processing automation? AI. Your spam filter? AI. ChatGPT generating your marketing copy? Also AI.

The conflation isn't accidental. When everything is "AI," vendors can charge AI prices for technology that's been around for decades. And buyers—especially at mid-market companies without dedicated ML teams—can't tell the difference.

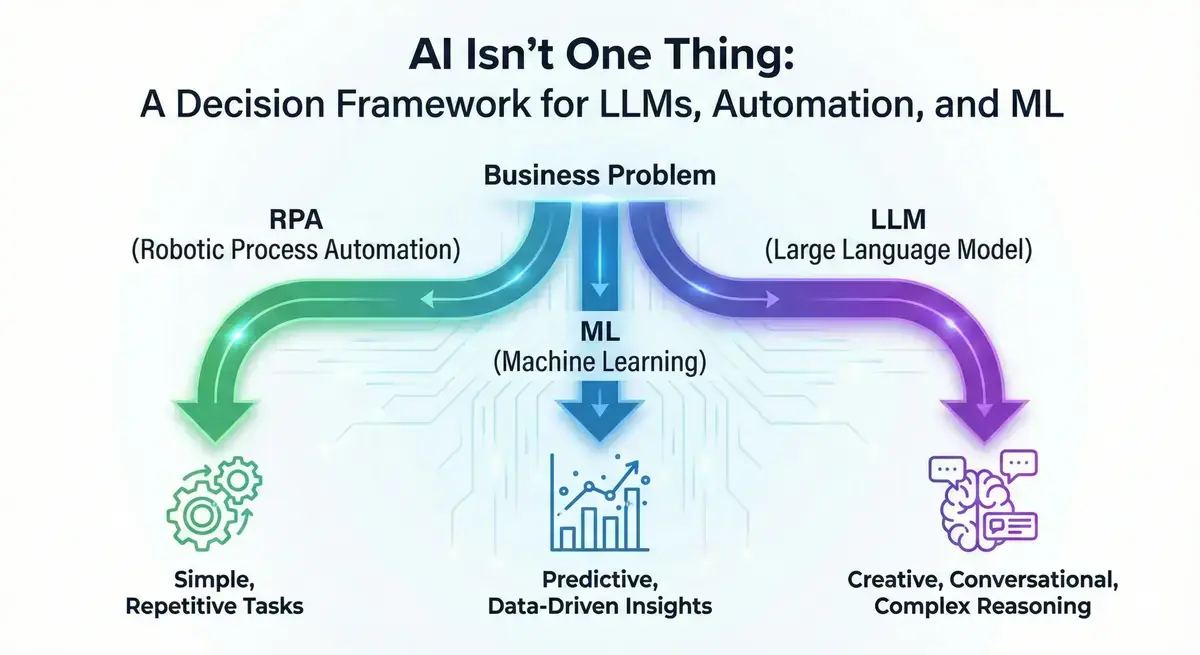

Here's the problem: These technologies solve fundamentally different problems at dramatically different costs. Process automation follows rules you define. Machine learning finds patterns in your historical data. Large language models reason about language and unstructured information. Choosing the wrong one doesn't just waste money—it creates the wrong expectations, builds the wrong infrastructure, and often fails to solve the actual problem.

Before you buy "AI," you need to understand what you're actually buying.

This post provides a practical framework for matching your real business problems to the right technology—not the technology your vendor happens to be selling this quarter.

The AI Technology Spectrum

Let's cut through the marketing and define what these technologies actually do.

Process Automation (RPA and Workflow Engines)

What it is: Rule-based automation that follows predefined steps. You tell it exactly what to do: "When an invoice arrives, extract the vendor name from field X, check it against the approved vendor list, route to the appropriate approver based on amount thresholds."
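To make "rule-based" concrete, here is a minimal sketch of the invoice rule described above. The vendor names, dollar thresholds, and approver roles are hypothetical placeholders, not values from any real RPA product; the point is only that every branch is something you wrote down in advance.

```python
from dataclasses import dataclass

# Hypothetical data for illustration; a real bot would read these from your ERP.
APPROVED_VENDORS = {"Acme Supply", "Northwind Metals"}

# (upper limit in dollars, approver role), checked in ascending order.
APPROVAL_THRESHOLDS = [
    (1_000, "team_lead"),
    (25_000, "department_manager"),
    (float("inf"), "cfo"),
]


@dataclass
class Invoice:
    vendor_name: str
    amount: float


def route_invoice(invoice: Invoice) -> str:
    """Return the queue an invoice goes to, using fixed rules only."""
    if invoice.vendor_name not in APPROVED_VENDORS:
        # A rule-based system cannot improvise: unknown vendors go to a person.
        return "manual_review"
    for limit, approver in APPROVAL_THRESHOLDS:
        if invoice.amount <= limit:
            return approver
    return "manual_review"


print(route_invoice(Invoice("Acme Supply", 4_200.0)))
print(route_invoice(Invoice("Unlisted Vendor LLC", 300.0)))
```

Nothing here learns or guesses. If an invoice arrives with the vendor name misspelled, it lands in manual review until someone updates the rules.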

Best for: High-volume, repetitive, structured tasks with clear rules and consistent inputs.

Real examples:

- Invoice processing and accounts payable

- Employee onboarding data entry

- Report generation and distribution

- Order entry from structured forms

Strengths: Fast execution (milliseconds, not seconds). Reliable—it does exactly what you tell it. Fully auditable. Low cost per transaction at scale.

Limitations: Brittle. Change your form layout and the bot breaks. Can't handle exceptions or novel situations. Maintenance-heavy—expect 20-30% of initial implementation cost annually just to keep it running as your systems evolve.

Cost profile: $50-200K implementation, $20-50K annual maintenance. Lower upfront than ML or LLMs, but those maintenance costs compound.
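A quick way to see how maintenance adds up is to total the spend over several years. The sketch below uses only the low and high ends of the RPA range quoted above and assumes maintenance stays flat each year, which is a simplification.

```python
def cumulative_cost(implementation: int, annual_maintenance: int, years: int) -> int:
    """Total spend after `years` of operation, in thousands of dollars."""
    return implementation + annual_maintenance * years


# Low and high ends of the RPA cost profile quoted above, in $K.
RPA_LOW = (50, 20)
RPA_HIGH = (200, 50)

for years in (1, 3, 5):
    low = cumulative_cost(*RPA_LOW, years)
    high = cumulative_cost(*RPA_HIGH, years)
    print(f"Year {years}: ${low}K to ${high}K total")
```

Under these assumptions, five years of ownership runs roughly $150K to $450K, and maintenance accounts for more than half of the total at both ends of the range.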

When to use it: You have a process that's manual, repetitive, follows clear rules, and your inputs are structured and consistent. The ROI math is simple: if you're spending $500K/year on manual data entry, $150K for RPA is obvious.

Machine Learning (Traditional ML/Predictive Models)

What it is: Statistical models trained on your historical data to predict future outcomes. You show it thousands of examples of customers who churned (and didn't), and it learns to predict which current customers are at risk.

Best for: Classification, prediction, and pattern recognition where you have labeled historical data.

Real examples:

- Customer churn prediction

- Demand forecasting

- Fraud detection

- Lead scoring

- Predictive maintenance

Strengths: Highly accurate for well-defined problems with good data. Results are often explainable: with simpler models such as regression or decision trees, you can trace why the model made a prediction. Decades of proven ROI in specific use cases.

Limitations: Requires clean, labeled data (which you probably don't have). Needs domain expertise to build and validate. Degrades over time as patterns shift—"model drift" requires ongoing monitoring and retraining.
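Drift monitoring can start very simply. The sketch below is one possible check, not a standard method: it compares accuracy on recent labeled outcomes against the accuracy measured at launch and flags the model when the gap exceeds a tolerance. The function name and the 5-point default tolerance are assumptions for illustration.

```python
def needs_retraining(
    baseline_accuracy: float,
    recent_predictions: list,
    recent_actuals: list,
    max_drop: float = 0.05,
) -> bool:
    """Flag a model whose recent accuracy fell more than `max_drop` below baseline."""
    if not recent_actuals or len(recent_predictions) != len(recent_actuals):
        raise ValueError("need equal-length, non-empty prediction and outcome lists")
    correct = sum(p == a for p, a in zip(recent_predictions, recent_actuals))
    recent_accuracy = correct / len(recent_actuals)
    return (baseline_accuracy - recent_accuracy) > max_drop


# Hypothetical churn labels: 1 = churned, 0 = stayed.
predicted = [1, 0, 0, 1, 0, 0, 1, 0, 0, 0]
actual = [1, 0, 1, 1, 0, 1, 0, 0, 0, 0]  # 7 of 10 predictions match
print(needs_retraining(0.90, predicted, actual))
```

A check like this only works if you keep collecting real outcomes after launch, which is part of the ongoing cost.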

Cost profile: $100-500K initial investment (heavily weighted toward data preparation and cleaning), $50-100K annual for maintenance, monitoring, and retraining.

When to use it: You have a specific prediction problem ("Which customers will churn?" "How much inventory do we need next month?") and you have historical data that captures the patterns you want to learn. If you don't have the data, ML isn't magic—it's statistics. Understanding why AI projects fail due to data architecture can help you assess ML readiness.

Large Language Models (LLMs/Generative AI)

What it is: Neural networks trained on vast amounts of text data, capable of understanding, generating, and reasoning about language. Unlike traditional ML models, they can handle tasks they weren't explicitly trained for, a capability known as "few-shot" or "zero-shot" learning.

Best for: Unstructured data, natural language interaction, complex reasoning, and content generation.

Real examples:

- Customer service chatbots with genuine comprehension

- Document analysis and summarization

- Knowledge synthesis across sources

- Content generation (with human review)

- Code assistance and explanation

Strengths: Remarkable flexibility. Can process documents, emails, contracts—anything with language. Can reason about novel situations. Handles the ambiguity that breaks rule-based systems.

Limitations: Hallucination is real and unavoidable with current architectures. High latency (seconds, not milliseconds). High cost per token that scales with usage. Outputs are probabilistic, not deterministic—the same input can produce different outputs.

Cost profile: Variable and often surprising. API costs of $5K-50K+ per month depending on volume, model choice, and prompt complexity. Plus ongoing costs for prompt engineering, output monitoring, and the integration work to make them useful.

When to use it: You need to process genuinely unstructured data, generate human-like content, or handle tasks that require language understanding. Not because it's trendy—because you've determined that the alternatives can't handle your problem.

The Decision Matrix

Here's the practical framework. When you have a problem, this tells you where to start:

| If Your Problem Is... | Consider First | Consider Second | Avoid |
|---|---|---|---|
| High-volume, rule-based tasks | RPA | ML (for handling exceptions) | LLMs (expensive overkill) |
| Predicting future outcomes from historical data | ML | None | LLMs (wrong tool entirely) |
| Processing unstructured documents | LLMs | ML (for classification steps) | RPA (fundamentally can't handle it) |
| Customer-facing natural language | LLMs | None | RPA (terrible user experience) |
| Mission-critical, audit-required processes | RPA or ML | LLMs with human-in-the-loop | Autonomous LLMs |
| Complex reasoning under uncertainty | LLMs with human-in-the-loop | None | RPA (not capable) |
| Exceptions and edge cases in automated flows | LLMs (as escalation layer) | Human review | More complex RPA rules |
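If you want to use the matrix in an intake form or internal tool, it can be encoded as a plain lookup. This is a sketch: the problem-type keys are hypothetical labels invented here, and the values simply restate the table rows.

```python
from typing import Optional

# Each entry mirrors one table row: (consider first, consider second, avoid).
# The keys are hypothetical labels; rename them to match your own intake form.
DECISION_MATRIX: dict[str, tuple[str, Optional[str], str]] = {
    "high_volume_rule_based": ("RPA", "ML", "LLMs"),
    "prediction_from_history": ("ML", None, "LLMs"),
    "unstructured_documents": ("LLMs", "ML", "RPA"),
    "customer_facing_language": ("LLMs", None, "RPA"),
    "mission_critical_audited": (
        "RPA or ML", "LLMs with human-in-the-loop", "Autonomous LLMs"),
    "complex_reasoning": ("LLMs with human-in-the-loop", None, "RPA"),
    "exceptions_in_automated_flows": (
        "LLMs as escalation layer", "Human review", "More complex RPA rules"),
}


def recommend(problem_type: str) -> dict:
    """Look up the starting recommendation for a named problem type."""
    if problem_type not in DECISION_MATRIX:
        raise KeyError(f"unknown problem type: {problem_type!r}")
    first, second, avoid = DECISION_MATRIX[problem_type]
    return {"first": first, "second": second, "avoid": avoid}


print(recommend("prediction_from_history"))
```

A lookup like this is itself rule-based automation, which suits the job: the hard part is classifying your problem honestly, not computing the answer.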

The key insight most vendors won't tell you: For most mid-market companies, the right order is process automation first, ML for specific prediction problems second, and LLMs for genuine unstructured reasoning third.

The reverse order—buying LLMs first because they're exciting—is almost always wrong.

LLMs are powerful, but they're expensive, unpredictable, and require significant infrastructure to deploy safely. If your actual problem is "we manually enter data from structured forms," you don't need a large language model. You need RPA. And if your problem is "we need to predict which customers will churn," you don't need generative AI. You need a proper ML model trained on your data.

The technology that fits your problem is the right choice. What's trending is noise.


The Hallucination Reality Check

LLMs make things up. This isn't a bug that will be fixed in the next release. Hallucination is a fundamental characteristic of how current LLM architectures work: they predict plausible text rather than retrieving verified facts. Rates vary significantly by model, task type, and data. Even the best models produce unreliable outputs in specialized domains, and smaller or older models are substantially worse.

Whatever the aggregate rate, domain-specific reality is far worse:

- Legal applications: Stanford HAI research found legal AI tools hallucinated case citations at alarmingly high rates—confidently citing cases that don't exist or are irrelevant.

- Medical applications: Research in npj Digital Medicine found that hallucination rates for clinical information varied by model and use case and were unacceptably high.

- Production deployments: The majority of enterprises now require human-in-the-loop verification for LLM outputs. They've learned the hard way.

Knowledge workers report spending significant time fact-checking AI-generated content — time that compounds across an organization.

The key question: Can you afford the cost of errors?

When LLMs are still worth the risk:

- Cost of human labor to do the task > cost of errors + cost of verification

- Errors are embarrassing but not catastrophic

- You have robust review processes already in place

- The task is genuinely unstructured and alternatives don't work
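The first condition in that list is an inequality you can check with your own numbers. Here is a minimal sketch, assuming errors and review effort scale with task volume; the parameter names and all sample figures are illustrative, not benchmarks.

```python
def llm_worth_the_risk(
    annual_labor_cost: float,
    tasks_per_year: int,
    error_rate: float,
    cost_per_error: float,
    review_cost_per_task: float,
) -> bool:
    """True when manual labor costs more than LLM errors plus verification."""
    expected_error_cost = tasks_per_year * error_rate * cost_per_error
    verification_cost = tasks_per_year * review_cost_per_task
    return annual_labor_cost > expected_error_cost + verification_cost


# Illustrative numbers only.
# Cheap errors: about $100K in errors plus $100K in review, against $300K of labor.
print(llm_worth_the_risk(300_000, 50_000, 0.02, 100, 2.0))
# Same workload, but each error carries $5,000 of liability exposure.
print(llm_worth_the_risk(300_000, 50_000, 0.02, 5_000, 2.0))
```

The same workload passes in the first case and fails in the second. The only thing that changed is the cost of a single error, which is why that number deserves a real estimate rather than a guess.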

When LLMs are too risky:

- Regulated industries with compliance requirements

- Legal or financial decisions with liability exposure

- Mission-critical processes where errors cascade

- Situations where you can't afford a human-in-the-loop

The vendors selling you LLM solutions have every incentive to downplay hallucination risk. You don't have that luxury. Establishing an AI governance framework helps manage these risks systematically.

The Cost Reality Check

The LLM market is projected to grow from $4.5 billion (2023) to over $80 billion by 2033 (Precedence Research). McKinsey's State of AI report shows GenAI adoption jumping from 33% to 65% in a single year. That growth is real—but so are the costs that enterprise buyers are discovering.

Rough cost ranges for mid-market implementations:

| Technology | Initial Investment | Annual Ongoing |
|---|---|---|
| Process Automation (RPA) | $50-200K | $20-50K |
| Machine Learning | $100-500K | $50-100K |
| Large Language Models | $50-150K + variable API | $60-600K+ |

These ranges depend on implementation complexity, data readiness, and integration requirements. A greenfield ML project with clean data sits at the low end; retrofitting ML into legacy ERP systems with fragmented data pushes toward the high end.

LLM API costs scale with usage in ways that surprise finance teams. A customer service bot handling 10,000 queries per month might cost $5K. Scale to 100,000 queries and you're at $50K. Add document processing, longer contexts, or more sophisticated prompting, and costs climb further.
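The arithmetic behind that example is linear, which is exactly what makes it easy to underestimate. A minimal sketch, assuming a flat per-query cost derived from the $5K for 10,000 queries figure above; real pricing is per token and varies by model, so treat the per-query rate as a stand-in.

```python
def monthly_llm_cost(queries_per_month: int, cost_per_query: float) -> float:
    """Linear usage-based cost: every additional query adds the same amount."""
    return queries_per_month * cost_per_query


# $5K for 10,000 queries implies about $0.50 per query in the example above.
COST_PER_QUERY = 5_000 / 10_000

for volume in (10_000, 100_000):
    monthly = monthly_llm_cost(volume, COST_PER_QUERY)
    print(f"{volume:>7,} queries/month: ${monthly:,.0f}/month, ${monthly * 12:,.0f}/year")
```

Annualized, those two volumes come to $60K and $600K, the same range as the ongoing LLM figure in the cost table. Unlike a license fee, this line item grows every time adoption succeeds.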

Hidden costs everyone underestimates:

- Integration with existing systems (often 50% of total project cost)

- Training and change management

- Prompt engineering and optimization (this is a real job now)

- Data pipeline maintenance

- Security and compliance review

- Monitoring and observability infrastructure

The question that actually matters: What's the cost of the problem you're solving?

If a manual process costs you $800K/year in labor and errors, then $200K for ML that cuts that by 60% is a strong ROI. If you're spending $100K/year on a process and a vendor quotes you $150K for an AI solution, payback takes more than a year even if the solution eliminates the entire cost, so the math rarely works no matter how impressive the demo is.
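Both scenarios can be run through the same back-of-the-envelope calculation. This sketch uses one possible definition of return (first-year savings divided by upfront cost) and ignores ongoing maintenance, so it flatters every option equally; the function and field names are assumptions.

```python
def evaluate(annual_problem_cost: float, reduction: float, solution_cost: float) -> dict:
    """First-year savings, return multiple, and payback period for a proposal."""
    annual_savings = annual_problem_cost * reduction
    if annual_savings <= 0:
        raise ValueError("the solution must reduce the problem cost")
    return {
        "annual_savings": annual_savings,
        "return_multiple": annual_savings / solution_cost,
        "payback_months": 12 * solution_cost / annual_savings,
    }


# Scenario 1: $800K/year problem, 60% reduction, $200K solution.
strong = evaluate(800_000, 0.60, 200_000)
# Scenario 2: $100K/year problem, $150K solution, best case of 100% reduction.
weak = evaluate(100_000, 1.00, 150_000)

for name, result in (("strong", strong), ("weak", weak)):
    print(f"{name}: {result['return_multiple']:.1f}x in year one, "
          f"payback in {result['payback_months']:.0f} months")
```

The first proposal pays for itself in about five months. The second takes eighteen months even in the best case, before any maintenance or integration costs are counted.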

Don't let technology excitement override basic financial analysis. The unsexy solution that delivers 3x ROI beats the exciting solution that delivers 0.5x.

Seven Questions to Ask Before You Buy

Before you sign a contract for any "AI" solution, get clear answers to these questions:

1. Is this a structured or unstructured problem? If your inputs follow predictable formats, you probably don't need LLMs. If they're genuinely unstructured—free-form text, varied documents, natural conversation—LLMs might be the right tool.

2. Do we have historical data to learn from? ML requires training data. If you don't have it, or it's not labeled, or it doesn't represent the problem you're trying to solve, ML won't work. No amount of vendor promises changes this.

3. What's our tolerance for errors? Be specific. "Low tolerance" isn't an answer. What happens when the system makes a mistake? What's the cost? This determines whether autonomous AI or human-in-the-loop is appropriate.

4. Is this mission-critical or experimental? Experimental use cases can tolerate more risk and less reliability. Mission-critical processes need proven technology with fallback options.

5. Can we afford human-in-the-loop verification? If LLM outputs require human review, you need to staff and budget for that review. Factor it into ROI calculations.

6. What's the volume of transactions or queries? High volume favors automation and ML. Low volume might not justify any automation investment. Variable volume makes LLM API costs unpredictable.

7. Do we have internal expertise to maintain this? Every AI solution requires ongoing maintenance. Who's going to monitor performance, retrain models, update prompts, fix integrations when systems change? If the answer is "the vendor," understand what that costs long-term.

Conclusion: "AI" Is a Category, Not a Solution

The vendors calling everything "AI" aren't lying—they're just not helping you make good decisions. Rule-based automation, statistical machine learning, and large language models all qualify as artificial intelligence in some sense. But they solve different problems, cost different amounts, and carry different risks.

Your job isn't to buy "AI." Your job is to solve business problems. Sometimes that means RPA. Sometimes that means ML. Sometimes that means LLMs. Often it means a combination—RPA for the structured workflow, ML for predictions, LLMs for the exceptions that require reasoning. The key is starting with business outcomes, not technology trends.

The vendors won't help you make this distinction because they want to sell you whatever they're selling this quarter. The consultants pushing "AI transformation" have their own incentives. You need to understand the landscape well enough to make the right call.

A note on agentic AI: LLMs orchestrating multi-step workflows autonomously—"agentic AI"—is an emerging category that combines LLM reasoning with automation. The cost and risk profiles are higher than standalone LLM use cases, and governance requirements are more demanding. For most mid-market companies in 2026, this is a "watch closely, deploy cautiously" technology.

What we do at Tributary: Our two-week Assessment evaluates your actual business problems, maps them to appropriate technologies, and tells you what's worth building versus what's vendor hype. You get a prioritized roadmap and clear recommendations—not a deck of buzzwords. We don't sell AI tools. We help you avoid buying the wrong ones.

Because the most expensive AI investment is the one that solves the wrong problem.

Take the Next Step

Choosing the right AI technology for your business problem can save you hundreds of thousands of dollars and months of wasted effort. Tributary helps mid-market companies navigate AI implementation with clarity and confidence.

Take our free AI Readiness Assessment → to discover which AI technologies match your needs, or schedule a Strategic Assessment to get a clear-eyed analysis of where to invest—and where not to.
