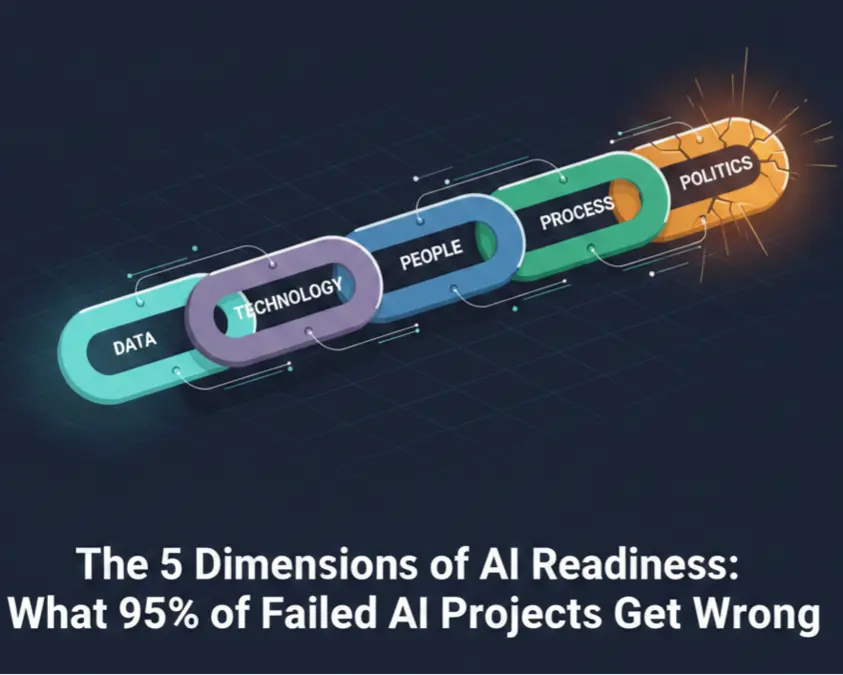

The 5 Dimensions of AI Readiness: What 95% of Failed AI Projects Get Wrong

Why most organizations sabotage their AI initiatives before writing a single line of code

Here's a number that should stop every executive mid-pitch: 95% of enterprise generative AI initiatives show no measurable P&L impact.

That's not a typo. Ninety-five percent. According to MIT's 2025 research, nearly every generative AI project that gets funded, staffed, and celebrated in company newsletters fails to move the needle on actual business results.

The usual explanation? "The technology wasn't ready" or "We need better AI tools."

But that explanation is wrong. And organizations that believe it are about to waste another year and another seven-figure budget learning the same lesson.

The real reason most AI projects fail has almost nothing to do with the technology. It has everything to do with what organizations refuse to measure before they start.

The Uncomfortable Pattern Behind AI Failure

We've been here before.

Cloud migrations that stalled. Digital transformation initiatives that became digital sprawl. ERP implementations that cost three times the budget and delivered half the value. The technology changed; the failure pattern didn't.

And now it's AI's turn.

BCG's research reveals what most vendors won't tell you: 70% of AI implementation challenges relate to people and processes, not technology. The algorithms work. The models are powerful. The platforms are mature. What breaks is everything around them.

Consider the pattern:

- Few organizations — typically fewer than one in five — have data quality that's genuinely AI-ready

- The vast majority struggle with data silos that prevent AI from accessing the information it needs

- More than half lack the AI talent and skills to implement and maintain AI systems

- Over half cite outdated processes as their biggest barrier to AI adoption

- The vast majority of AI proofs-of-concept fail to transition to production

That last statistic deserves emphasis. Nearly nine out of ten AI pilots that "succeed" in the lab never make it to production deployment. The pilot works. The rollout fails. The organization blames the technology and moves on to the next vendor.

But the technology wasn't the problem. The organization wasn't ready.

Why "AI Readiness" Isn't About Technology

Most AI readiness assessments focus on technical infrastructure. Do you have GPUs? Is your data in the cloud? Can your systems handle machine learning workloads?

These questions matter. But they're insufficient. Fewer than a third of organizations have robust GPU infrastructure for AI, yet that's rarely what kills AI projects.

What kills AI projects is attempting to deploy intelligent automation into organizations that can't handle unintelligent automation. It's asking AI to optimize processes that no one has documented. It's expecting data-driven insights from data that lives in thirty disconnected spreadsheets across five departments that don't talk to each other.

AI doesn't fix organizational dysfunction. It amplifies it.

A poorly documented process becomes a poorly automated process that makes mistakes at machine speed. Fragmented data becomes fragmented AI outputs that no one trusts. Misaligned executives become competing AI initiatives that duplicate costs and confuse employees.

The organizations that succeed with AI aren't the ones with the best technology. They're the ones that did the hard work of getting ready before they invested in AI tools.

The Five Dimensions of AI Readiness

After synthesizing research from industry leaders—McKinsey, BCG, Gartner, MIT, Deloitte, and others—we've identified five dimensions that predict AI success or failure. Miss any one of them, and your AI investment is at risk.

Dimension 1: Data (Weight: 25%)

The Foundation Everything Else Builds On

Every AI system is only as good as the data that feeds it. This isn't news. But most organizations dramatically underestimate what "AI-ready data" actually requires.

The numbers are brutal:

- Few organizations — typically fewer than one in five — have data quality that's genuinely AI-ready

- The vast majority struggle with data silos—the same information lives in multiple systems with different values

- More than half say integration difficulties have derailed their AI outcomes

What breaks:

- Data silos: The same customer exists in Salesforce, SAP, HubSpot, and Zendesk—with different addresses, different contact names, and different account IDs in each system. When AI asks "Who is this customer?", there's no consistent answer.

- Poor data quality: AI models don't fix bad data; they scale it. If 15% of your customer records have incorrect information, your AI will make decisions based on that incorrect information—faster and at greater scale than humans ever could.

- Lack of governance: Nobody knows who owns critical datasets. When something's wrong, there's no one to fix it. When there's a question, there's no one to answer it.
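The data silo problem above can be made concrete with a small consistency check. This is a minimal sketch, not a production matching routine. It assumes each system's export is already loaded into a dictionary keyed by a shared customer key (an email address here, which many organizations will not have). The record layout, the sample values, and the `find_conflicts` helper are all hypothetical.

```python
from collections import defaultdict

# Hypothetical exports: system name -> {customer key -> record}.
# Field names and values are illustrative, not real data.
exports = {
    "Salesforce": {"ops@acme.example": {"address": "12 Main St", "contact": "J. Lee"}},
    "SAP": {"ops@acme.example": {"address": "12 Main Street", "contact": "J. Lee"}},
    "Zendesk": {"ops@acme.example": {"address": "99 Oak Ave", "contact": "Jordan Lee"}},
}

def find_conflicts(exports):
    """Return {customer key: {field: {value: [systems]}}} for fields whose values disagree."""
    seen = defaultdict(lambda: defaultdict(lambda: defaultdict(list)))
    for system, records in exports.items():
        for key, record in records.items():
            for field, value in record.items():
                seen[key][field][value].append(system)

    conflicts = {}
    for key, fields in seen.items():
        # A field conflicts when more than one distinct value was recorded for it.
        disagreeing = {f: dict(v) for f, v in fields.items() if len(v) > 1}
        if disagreeing:
            conflicts[key] = disagreeing
    return conflicts

conflicts = find_conflicts(exports)
for key, fields in conflicts.items():
    for field, values in fields.items():
        print(key, field, values)
```

The comparison is an exact string match, so "12 Main St" and "12 Main Street" count as a conflict. A real check would normalize values first, but even this crude version shows how quickly one customer turns into several.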

The diagnostic question: If your organization wanted to train an AI model on your historical data tomorrow, what would happen?

If the honest answer is "We'd spend months just locating and cleaning the data," you're not ready for AI. You're ready for a data architecture project. Learn more about why AI projects fail due to data architecture issues and how to fix them.

Dimension 2: Technology (Weight: 20%)

Infrastructure That Enables or Blocks

Technology matters—but not in the way most organizations think. The question isn't "Do you have the latest AI platform?" It's "Can your existing systems support AI integration?"

The reality check:

- Fewer than a third of organizations have robust GPU infrastructure for AI workloads

- The overwhelming majority cite legacy system constraints blocking AI adoption

- Nearly all cite data integration as a significant technical challenge

- A substantial share of Fortune 500 enterprise software is more than 20 years old

What breaks:

- Legacy system constraints: That critical system from 2008 contains irreplaceable historical data. The only way to extract it is a manual CSV export that takes three days and requires someone who retired two years ago. AI can't work with that.

- No API access: If your core systems can't talk to each other programmatically, they can't talk to AI either. Manual data movement doesn't scale.

- MLOps immaturity: Building an AI model is one thing. Deploying it, monitoring it, versioning it, and maintaining it in production is another. Fewer than one in five organizations achieve enterprise-scale AI deployment.

The diagnostic question: If someone asked "How many active customers do we have?", what would happen?

If different systems would give different answers, and someone would need to spend hours reconciling data from multiple sources, your technology foundation isn't ready for AI.
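One way to see why the answers diverge is to count "active" customers under each system's own definition. The sketch below is illustrative only: the two systems, their records, and the 90-day and 30-day activity windows are assumptions, not figures from any particular platform.

```python
from datetime import date, timedelta

TODAY = date(2025, 1, 1)  # fixed date so the example is reproducible

# Hypothetical per-system records: (customer_id, last_activity_date).
crm = [
    ("c1", date(2024, 12, 20)),
    ("c2", date(2024, 6, 1)),
    ("c3", date(2024, 11, 15)),
    ("c5", date(2024, 12, 5)),
]
billing = [
    ("c1", date(2024, 12, 10)),
    ("c3", date(2024, 3, 10)),
    ("c4", date(2024, 12, 28)),
]

def active_ids(records, window_days):
    """Return the IDs whose last activity falls within the given window."""
    cutoff = TODAY - timedelta(days=window_days)
    return {cid for cid, last in records if last >= cutoff}

# Assumed definitions: the CRM treats 90 days of activity as "active",
# while billing requires an invoice within the last 30 days.
crm_active = active_ids(crm, window_days=90)
billing_active = active_ids(billing, window_days=30)

counts = {"crm": len(crm_active), "billing": len(billing_active)}
only_crm = crm_active - billing_active
only_billing = billing_active - crm_active
print(counts, sorted(only_crm), sorted(only_billing))
```

The two sets that appear in only one system are the reconciliation work someone currently does by hand. An AI system given both sources inherits that ambiguity rather than resolving it.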

Dimension 3: People (Weight: 20%)

Skills, Understanding, and Willingness

AI is implemented by people, used by people, and—critically—resisted by people. The human dimension of AI readiness is consistently underestimated.

The talent gap is widening:

- More than half of organizations lack AI talent and skills

- The majority cite the skills gap as their primary challenge

- The AI skills shortage has grown dramatically over the past two years

- Very few organizations have begun meaningfully upskilling their workforce

But talent isn't the only people problem:

- The majority of workers worry about job loss from AI

- Nearly half of workers even at AI-advanced organizations worry about job security

- Most mid-market employees feel unprepared for AI

- Organizations with aligned, adaptable cultures consistently outperform peers on revenue growth

What breaks:

- Leadership gap: Almost no executives rate their organization as "mature" on AI deployment. When leaders don't understand AI capabilities and limitations, they set unrealistic expectations, approve the wrong projects, and lose credibility when initiatives fail.

- Employee resistance: People who fear replacement don't adopt new tools—they quietly sabotage them. Every "the AI got it wrong" complaint that leads to reverting to manual processes is a small victory for change resistance.

- Skills mismatch: You can't implement AI with people who don't understand AI. And you can't train people on AI when they're worried about whether AI will take their jobs.

The diagnostic question: How would employees describe their feelings about AI's impact on their roles?

If the honest answer involves fear, anxiety, or skepticism, your people dimension needs attention before any AI initiative can succeed. Understanding why employees fear AI and how to turn them into advocates is essential for addressing this dimension.

Dimension 4: Process (Weight: 15%)

The Operational Backbone AI Depends On

AI automates processes. If your processes are undefined, inconsistent, or exist only in people's heads, AI has nothing to automate—or, worse, it automates chaos.

The documentation problem is universal:

- Over half of organizations cite outdated processes as their biggest AI barrier

- Few workflows are well-documented enough for AI to learn from

- Most organizations struggle with cultural and structural barriers to change

- The vast majority of AI POCs fail to transition to production

What breaks:

- Undocumented processes: When a new employee asks "How does X work here?", the answer is "Ask Sarah" or "It depends." If humans can't explain the process, AI can't learn it.

- Inconsistent execution: The same task is done differently by different team members on different days. AI trained on inconsistent processes produces inconsistent outputs—and then gets blamed for being unreliable.

- Pilot-to-production gap: AI works beautifully in the controlled environment of a pilot. Then it encounters real-world variations, exceptions, and edge cases that no one documented. The pilot "succeeded." The rollout failed.

The diagnostic question: What percentage of your core business processes are formally documented, and when were they last updated?

If documentation is sparse, outdated, or stored in people's heads, you need process work before AI work. For organizations ready to move forward despite imperfect processes, running a proof of concept that actually proves something can help validate business viability.
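The diagnostic question can be answered with a simple inventory. The following sketch assumes a hand-maintained list of core processes with the date each was last documented. The process names, the dates, and the one-year staleness cutoff are all hypothetical.

```python
from datetime import date

AS_OF = date(2025, 1, 1)   # fixed for reproducibility
STALE_AFTER_DAYS = 365     # assumed staleness cutoff, not a figure from this article

# Hypothetical inventory: process name -> date its documentation was last updated,
# or None when the process exists only in people's heads.
processes = {
    "order-to-cash": date(2024, 9, 1),
    "customer onboarding": date(2021, 3, 15),
    "returns handling": None,
    "vendor approval": None,
}

documented = {name: d for name, d in processes.items() if d is not None}
current = {
    name for name, d in documented.items()
    if (AS_OF - d).days <= STALE_AFTER_DAYS
}

pct_documented = 100 * len(documented) / len(processes)
pct_current = 100 * len(current) / len(processes)
print(f"documented: {pct_documented:.0f}%, documented and current: {pct_current:.0f}%")
```

Separating "documented" from "documented and current" matters because a process description last touched years ago may describe work nobody does that way anymore.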


Dimension 5: Politics (Weight: 10%)

The Hidden Dimension That Kills More Projects Than Bad Technology

This is the dimension most assessments ignore. It's also the dimension that explains why technically sound AI projects with adequate budgets and talented teams still fail.

The political reality:

- McKinsey has reported that roughly 70% of change programs fail to achieve their goals, with employee resistance among the leading causes

- Nearly all organizations need better AI governance

- A significant share of AI leaders cite cross-functional collaboration as their biggest unmet need

- Leaders tasked with governing AI often understand it least

What breaks:

- Lack of executive sponsorship: The executive "supports" AI but delegates all decisions to subordinates. When conflicts arise, when budgets compete, when departments resist, there's no one with authority and commitment to push through.

- Departmental silos and turf protection: Marketing won't share data with Sales because "that's our customer data." Operations won't let IT touch their systems because "we know what we need." Every department protects its territory, and AI—which requires cross-functional data and collaboration—becomes the casualty.

- Historical failures and organizational trauma: "We tried something like this before." The scars from failed ERP implementations, botched digital transformations, and abandoned technology initiatives create organizational antibodies that attack new projects. Staff become cynical. Sponsors become cautious. Projects die slow deaths in committee.

- Decision-making paralysis: AI proposals cycle through committees without resolution. Pilots stay in "pilot purgatory" because no one has authority—or willingness—to make the production decision.

The diagnostic question: What happened with your last major technology or process initiative?

If it failed, if it left scars, if people are still wary—that organizational trauma will shape your AI initiative's fate more than any technology choice. Building an AI governance framework can help establish trust and accountability that addresses these political challenges.

Why Politics Is Your Secret Weapon

Here's the counterintuitive insight: the Politics dimension, despite being weighted at only 10%, may be the most important dimension to assess.

Why? Because political problems can veto technical success.

You can have excellent data, modern technology, skilled people, and documented processes. If your executives aren't aligned, if your departments won't collaborate, if your organization is still nursing wounds from past failures—none of the technical excellence matters.

Politics kills more AI initiatives than bad algorithms.

This is why most assessments fail. They measure what's measurable—data quality scores, technology audits, skills inventories. They ignore what's uncomfortable—executive alignment, departmental turf wars, organizational trauma.

An assessment that doesn't probe the political dimension is an assessment that will miss the most common cause of AI failure.

The Veto Threshold: Why Partial Readiness Isn't Readiness

One critical insight from our research: AI readiness isn't additive. You can't compensate for a critical weakness in one dimension with strength in others.

Think of it like a chain. A chain with four strong links and one weak link breaks at the weak link. An organization with strong data, technology, people, and processes but toxic politics will fail at the political link.

This means any assessment must include a "veto threshold." If any single dimension falls below a critical level, the overall result should reflect that vulnerability, regardless of how strong other dimensions appear.

An organization scoring 80% overall but 25% on Politics isn't "mostly ready." It's at serious risk of failure—and the risk is hiding in the dimension no one wants to discuss.
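The veto idea can be sketched as a weighted average with a per-dimension floor. This is an illustration under stated assumptions, not the scoring method of any specific assessment. The five weights given above (25%, 20%, 20%, 15%, 10%) add up to 90%, so the sketch divides by their total. The veto threshold of 40 and the sample scores are hypothetical.

```python
# Dimension weights as stated in the article. They sum to 0.90, not 1.00,
# so the weighted score is normalized by the total (an assumption).
WEIGHTS = {
    "data": 0.25,
    "technology": 0.20,
    "people": 0.20,
    "process": 0.15,
    "politics": 0.10,
}
VETO_THRESHOLD = 40  # hypothetical cutoff; the article does not specify one

def assess(scores):
    """scores: dimension -> 0..100. Returns (weighted score, vetoed dimensions)."""
    total_weight = sum(WEIGHTS.values())
    overall = sum(WEIGHTS[d] * scores[d] for d in WEIGHTS) / total_weight
    # Any dimension below the threshold is flagged, however strong the average is.
    vetoed = sorted(d for d in WEIGHTS if scores[d] < VETO_THRESHOLD)
    return overall, vetoed

# Hypothetical organization: strong everywhere except politics.
scores = {"data": 90, "technology": 85, "people": 85, "process": 88, "politics": 25}
overall, vetoed = assess(scores)
status = "at risk" if vetoed else "no veto triggered"
print(f"weighted score: {overall:.1f}, vetoed: {vetoed}, status: {status}")
```

With these sample inputs the weighted score lands at roughly 80, yet the organization is still flagged because politics sits below the floor. The average alone would have hidden the weak link.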

How to Assess Your Organization's Readiness

Before investing in AI tools, platforms, or consultants, answer these questions honestly:

Data:

- Can you trace where your data comes from and how it's transformed?

- Do you have documented ownership for your critical datasets?

- How long does it take a data scientist to access the data they need?

Technology:

- What percentage of your critical systems are accessible via APIs?

- Can your infrastructure support real-time data processing?

- Have you successfully scaled an AI pilot to production? What were the challenges?

People:

- What percentage of your workforce has received formal AI training?

- How would you characterize employee sentiment toward AI adoption?

- Can your leadership team articulate which AI tools fit which problems?

Process:

- What percentage of your core processes are formally documented?

- When you need to modify a process, what happens?

- Have you attempted AI pilots? What happened after the pilot phase?

Politics:

- How aligned is your executive team on AI priorities?

- When initiatives require cross-departmental cooperation, how smoothly does it work?

- What happened with your last major technology initiative?

If you answered honestly and found gaps—that's valuable. Those gaps are where AI investments go to die. Address them first.

The Path Forward

The 95% failure rate isn't a technology problem. It's a readiness problem.

Organizations that succeed with AI aren't lucky. They're prepared. They did the unglamorous work of:

- Unifying their data before asking AI to analyze it

- Documenting their processes before asking AI to automate them

- Aligning their executives before asking for AI budgets

- Addressing their people's fears before asking them to adopt AI tools

- Acknowledging their political realities before pretending they don't exist

This work isn't exciting. It doesn't make for compelling vendor demos or conference keynotes. But it's the difference between the 95% that fail and the 5% that succeed.

The fastest path to AI value isn't buying the best AI tools. It's building the organizational foundation that lets any AI tools work.

Take the Next Step

Understanding where you stand across all five dimensions is the first step toward AI success. Tributary helps mid-market companies navigate AI implementation with clarity and confidence.

Take our free AI Readiness Assessment → to get an immediate score breakdown showing your strengths and vulnerabilities, or schedule a Strategic Assessment for an expert, objective diagnostic of your organization's true readiness.
